We will be setting up a load balancer with Heartbeat and Ldirectord on CentOS 6.

Note

Ldirectord has been removed from the RHEL 6 default repository and replaced with Piranha.

As of RHEL 6.6, Red Hat provides support for HAProxy and keepalived in addition to the Piranha load balancing software.

Piranha has been removed from the RHEL 7 default repository and replaced with HAProxy and keepalived.

Software

Software used in this article:

- CentOS 6.7

- Heartbeat 3.0.4

- Ldirectord 3.9.5

- Ipvsadm 1.26

Networking and IP Addresses

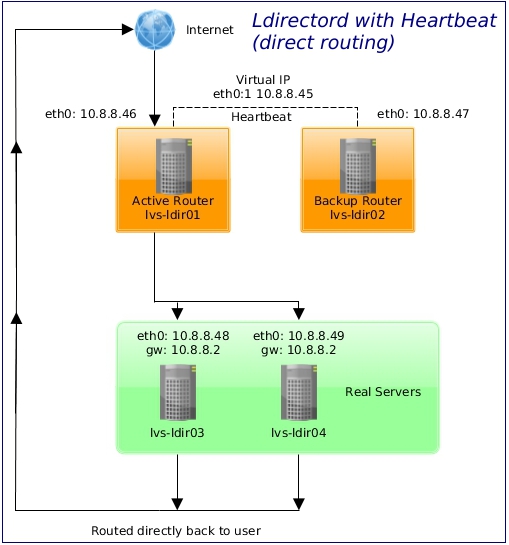

Our network is set up as follows:

- 10.8.8.0/24 – LAN with access to the Internet.

Hostnames and roles of the virtual machines we are going to use:

- lvs-ldir01 – the active ldirectord router with heartbeat,

- lvs-ldir02 – the backup ldirectord router with heartbeat,

- lvs-ldir03/lvs-ldir04 – real servers, both running a pre-configured Apache webserver.

See the schema above for more information.

Direct Routing Real Server Node Configuration

On each real server node (lvs-ldir03 and lvs-ldir04), run the following command for the VIP 10.8.8.45 and protocol combination intended to be serviced for the real server:

# iptables -t nat -A PREROUTING -p tcp -d 10.8.8.45 --dport 80 -j REDIRECT

The command above will cause the real servers to process packets destined for the VIP and port that they are given. Make sure that firewall changes are saved and restored after a restart:

# service iptables save

Ldirectord Setup

Linux Director Daemon (ldirectord) is a daemon that monitors and administers real servers in the Linux Virtual Server (LVS) cluster. It checks the health of the real servers by periodically requesting a known URL and verifying that the response contains an expected string.

While ldirectord is used to monitor and administer real servers in the LVS cluster, heartbeat is used as the fail-over monitor for the load balancers (ldirectord).

Installation

The ldirectord installation steps must be repeated on both routers, the lvs-ldir01 and the lvs-ldir02.

Install dependencies for ldirectord:

# yum install -y ipvsadm perl-libwww-perl perl-IO-Socket-INET6 perl-Net-SSLeay perl-MailTools

Ensure the IP Virtual Server Netfilter kernel module is loaded:

# modprobe ip_vs

A fairly old (2010-03-21) heartbeat-ldirectord v2.1.4 package was available from epel5 repositories, but it’s been removed now.

We are going to use an ldirectord v3.9.5 package which was released on 2013-07-26.

Download it from http://rpm.pbone.net/index.php3/stat/4/idpl/23860919/dir/centos_6/com/ldirectord-3.9.5-3.1.x86_64.rpm.html or alternatively get it here (direct link) and install.

# rpm -Uvh ldirectord-3.9.5-3.1.x86_64.rpm

Disable on boot as it will be managed by heartbeat:

# chkconfig ldirectord off

Configure

Create the /etc/ha.d/ldirectord.cf file and add the following content:

# Global Directives
checkinterval = 5
checktimeout = 10
autoreload = no
logfile = "/var/log/ldirectord.log"
#logfile="local0"
quiescent = yes
#
# virtual = x.y.z.w:p
#	protocol = tcp|udp
#	scheduler = rr|wrr|lc|wlc
#	real = x.y.z.w:p gate|masq|ipip [weight]
#
# Virtual Server for HTTP
virtual = 10.8.8.45:80
	real = 10.8.8.48:80 gate 1
	real = 10.8.8.49:80 gate 1
	service = http
	protocol = tcp
	request = "lbcheck.html"
	receive = "found"
	scheduler = wlc
	checktype = negotiate

The directives under “virtual=” must be indented with a [TAB] character, not spaces. The lbcheck.html file containing the string “found” must be present on the real web servers.
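Because a space-indented directive is easy to miss by eye, a quick check of the file can help. The following is a minimal sketch, not part of ldirectord itself; the function name check_virtual_indent is hypothetical, and it assumes that a "virtual = ..." block ends at the next unindented, non-comment line.

```shell
# check_virtual_indent FILE
# Reports lines inside a "virtual = ..." block that begin with spaces
# instead of a TAB. Returns non-zero if any such line is found.
# (Hypothetical helper; the block-boundary rule is an assumption.)
check_virtual_indent() {
    awk '
        # A "virtual = ..." line opens a block.
        /^virtual[ \t]*=/ { in_block = 1; next }
        # Any other unindented, non-comment line closes it.
        /^[^ \t#]/        { in_block = 0 }
        # Inside a block, a line starting with one or more spaces is suspect.
        in_block && /^ +[^ ]/ {
            printf "line %d: starts with a space, expected TAB\n", NR
            bad = 1
        }
        END { exit bad + 0 }
    ' "$1"
}
```

It could be run as check_virtual_indent /etc/ha.d/ldirectord.cf before starting the service.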

See “man ldirectord” for configuration directives not covered here.
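The health check configured above requests lbcheck.html and expects the string "found" in the response. As a rough sketch, the file could be created on each real server and verified from a router as follows. The function names are hypothetical, and /var/www/html is an assumption about the Apache DocumentRoot that is not stated in this article.

```shell
# create_lbcheck [DOCROOT]
# Writes the health-check page that ldirectord requests.
# DOCROOT defaults to /var/www/html (an assumed Apache DocumentRoot).
create_lbcheck() {
    docroot="${1:-/var/www/html}"
    printf 'found\n' > "${docroot}/lbcheck.html"
}

# check_lbcheck HOST[:PORT]
# Returns 0 if the page is reachable and contains the expected string.
check_lbcheck() {
    curl -s --max-time 10 "http://$1/lbcheck.html" | grep -q 'found'
}
```

For instance, create_lbcheck would be run on lvs-ldir03 and lvs-ldir04, and check_lbcheck 10.8.8.48:80 on a router.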

Test ldirectord on the lvs-ldir01 router:

# /etc/init.d/ldirectord start

We should see something like this:

# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.8.8.45:80 wlc
  -> 10.8.8.48:80                 Route   1      0          0
  -> 10.8.8.49:80                 Route   1      0          0
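With "quiescent = yes", a real server that fails its health check stays in the table with its weight set to 0 rather than being removed. A small filter over the ipvsadm output can list such servers. This is only a sketch: the function name list_quiesced is hypothetical, and it assumes the column layout shown above (weight in the fourth field).

```shell
# list_quiesced
# Reads `ipvsadm -Ln` output on stdin and prints every real server
# (address:port) whose weight is 0.
list_quiesced() {
    # Real-server rows start with "->"; the header row has "Weight"
    # in field 4, so the string comparison with "0" skips it.
    awk '$1 == "->" && $4 == "0" { print $2 }'
}
```

On a router it could be used as ipvsadm -Ln | list_quiesced.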

Let us stop the ldirectord service:

# /etc/init.d/ldirectord stop

Heartbeat Setup

Heartbeat is a basic high-availability subsystem for Linux-HA.

Installation

The heartbeat installation steps must be repeated on both routers, the lvs-ldir01 and the lvs-ldir02.

You can, for example, configure one router node and then rsync config to the other one:

# rsync -ah --progress /etc/ha.d/ [email protected]:/etc/ha.d/

Install heartbeat:

# yum install -y heartbeat

Enable on boot:

# chkconfig heartbeat on

Enable forwarding:

# sed -i 's/net.ipv4.ip_forward = 0/net.ipv4.ip_forward = 1/' /etc/sysctl.conf
# sysctl -p
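A plain substitution only works if the line is already present with the expected value and spacing. The sketch below is one way to make the change idempotent; the function name enable_ip_forward is hypothetical, and it relies on GNU sed's -i option, which is available on CentOS.

```shell
# enable_ip_forward [FILE]
# Ensures FILE (default /etc/sysctl.conf) contains exactly one
# "net.ipv4.ip_forward = 1" setting, whatever the previous value was.
enable_ip_forward() {
    conf="${1:-/etc/sysctl.conf}"
    if grep -Eq '^net\.ipv4\.ip_forward[[:space:]]*=' "$conf"; then
        # Rewrite the existing setting in place.
        sed -i 's/^net\.ipv4\.ip_forward[[:space:]]*=.*/net.ipv4.ip_forward = 1/' "$conf"
    else
        # No setting yet: append one.
        echo 'net.ipv4.ip_forward = 1' >> "$conf"
    fi
}
```

After calling enable_ip_forward, the change would still need to be loaded with sysctl -p.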

Configuration

Create the /etc/ha.d/ha.cf file and add the following content:

logfile /var/log/ha.log
logfacility local0
debug 0
# Disable the Pacemaker cluster manager
# crm off|on|respawn
crm off
# Interval between heartbeat packets
keepalive 2
# How quickly Heartbeat should decide that a node in a cluster is dead
deadtime 6
# Which port Heartbeat should listen on
udpport 694
# Which interfaces Heartbeat sends UDP broadcast traffic on
bcast eth0
# Automatically fail back to a "primary" node (deprecated)
auto_failback off
# What machines are in the cluster. Use "uname -n"
node lvs-ldir01.hl.local
node lvs-ldir02.hl.local
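The node names in ha.cf have to match what "uname -n" prints on each router, which is easy to get wrong when short and fully qualified hostnames are mixed. A minimal sketch of a check follows; the function name node_in_hacf is hypothetical.

```shell
# node_in_hacf FILE [NAME]
# Returns 0 if NAME (default: output of `uname -n`) appears in a
# "node" directive of the given ha.cf file, non-zero otherwise.
node_in_hacf() {
    conf="$1"
    name="${2:-$(uname -n)}"
    awk -v n="$name" '
        # A node directive may list one or more names.
        $1 == "node" { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }
    ' "$conf"
}
```

Running node_in_hacf /etc/ha.d/ha.cf || echo "hostname not listed in ha.cf" on each router would flag a mismatch before heartbeat is started.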

Configure iptables firewall to allow heartbeat and HTTP traffic:

# iptables -A INPUT -s 10.8.8.0/24 -p udp --dport 694 -j ACCEPT
# iptables -A INPUT -s 10.0.0.0/8 -p tcp --dport 80 -j ACCEPT

Save firewall rules:

# service iptables save

Heartbeat can start and stop ldirectord as a managed resource. Put the ldirectord resource script under the /etc/ha.d/resource.d/ directory, then add a line like the following to /etc/ha.d/haresources:

lvs-ldir01.hl.local IPaddr::10.8.8.45 ldirectord::ldirectord.cf

Now, start the heartbeat service on both routers, the lvs-ldir01 and the lvs-ldir02:

# /etc/init.d/heartbeat start

At this point, the virtual IP should be assigned to the eth0 interface on the primary router:

# ip ad show eth0

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 08:00:27:ff:46:00 brd ff:ff:ff:ff:ff:ff

inet 10.8.8.46/24 brd 10.8.8.255 scope global eth0

inet 10.8.8.45/24 brd 10.8.8.255 scope global secondary eth0

inet6 fe80::a00:27ff:feff:4600/64 scope link

valid_lft forever preferred_lft forever

# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.8.8.45:80 wlc
  -> 10.8.8.48:80                 Route   1      0          15
  -> 10.8.8.49:80                 Route   1      0          16

Failover Test

Stop the heartbeat service on the primary router node lvs-ldir01:

[root@lvs-ldir01 ~]# /etc/init.d/heartbeat stop

The lvs-ldir02 node should become active:

[root@lvs-ldir02 ~]# ip ad show eth0

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 08:00:27:ff:47:00 brd ff:ff:ff:ff:ff:ff

inet 10.8.8.47/24 brd 10.8.8.255 scope global eth0

inet 10.8.8.45/24 brd 10.8.8.255 scope global secondary eth0

inet6 fe80::a00:27ff:feff:4700/64 scope link

valid_lft forever preferred_lft forever
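To watch the failover from a client's point of view, the VIP can be polled while heartbeat is stopped on the active router. The loop below is a rough sketch under stated assumptions: the function name poll_service is hypothetical, and the probe is whatever command is passed in, so nothing here depends on a particular HTTP client.

```shell
# poll_service COUNT INTERVAL CMD [ARGS...]
# Runs CMD COUNT times, INTERVAL seconds apart, printing a timestamped
# ok/FAIL line per probe and a summary of failed probes at the end.
poll_service() {
    count="$1"; interval="$2"; shift 2
    failures=0
    i=0
    while [ "$i" -lt "$count" ]; do
        if "$@" >/dev/null 2>&1; then
            echo "$(date +%T) ok"
        else
            echo "$(date +%T) FAIL"
            failures=$((failures + 1))
        fi
        i=$((i + 1))
        sleep "$interval"
    done
    echo "failed probes: ${failures}/${count}"
}
```

For example, poll_service 30 1 curl -sf --max-time 1 -o /dev/null http://10.8.8.45/lbcheck.html could be started on a client in the 10.8.8.0/24 network just before stopping heartbeat on lvs-ldir01.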

Excerpts from the /var/log/ha.log:

lvs-ldir02.hl.local heartbeat: [4808]: info: mach_down takeover complete.
lvs-ldir02.hl.local heartbeat: [4808]: WARN: node lvs-ldir01.hl.local: is dead
lvs-ldir02.hl.local heartbeat: [4808]: info: Dead node lvs-ldir01.hl.local gave up resources.
lvs-ldir02.hl.local heartbeat: [4808]: info: Link lvs-ldir01.hl.local:eth0 dead.

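Now, if we start the heartbeat service on the lvs-ldir01 node, resources are transferred from the lvs-ldir02 back to the lvs-ldir01 only when ha.cf contains “auto_failback on”. With “auto_failback off”, as in the configuration above, they remain on the lvs-ldir02.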

Troubleshooting

Check logs /var/log/ha.log and /var/log/ldirectord.log.

References

https://www.suse.com/communities/blog/load-balancing-howto-lvs-ldirectord-heartbeat-2/

http://www.linux-ha.org/doc/man-pages/re-hacf.html

http://www.linuxvirtualserver.org/docs/ha/heartbeat_ldirectord.html

Perfect.

Congratulations on the article.

Regards,

Danilo

You’re welcome.

cp /usr/share/doc/heartbeat-3.0.4/authkeys /etc/ha.d/authkeys

Then uncomment the last 4 lines and chmod 600 the file.

At least in my case I added this step.
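As an alternative to editing the sample file described in the comment above, the authkeys file could be generated directly. This is only a sketch and not the commenter's method: the function name make_authkeys is hypothetical, and it assumes the sha1 authentication method with a randomly generated key. Heartbeat expects the file to have mode 600, and the same file has to be present on both routers.

```shell
# make_authkeys [FILE]
# Writes a Heartbeat authkeys file (default /etc/ha.d/authkeys) that
# uses the sha1 method with a random key, readable by the owner only.
make_authkeys() {
    out="${1:-/etc/ha.d/authkeys}"
    # Derive a 40-character hex key from random data.
    key="$(dd if=/dev/urandom bs=512 count=1 2>/dev/null | sha1sum | cut -d' ' -f1)"
    # Create the file with a restrictive umask so it is never world-readable.
    ( umask 077; printf 'auth 1\n1 sha1 %s\n' "$key" > "$out" )
    chmod 600 "$out"
}
```

The file would be generated once, for example on lvs-ldir01, and then copied to lvs-ldir02 along with the rest of /etc/ha.d/.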

awesome post!