I read an article about TrueNAS enabling container storage for Kubernetes by using the Democratic CSI driver to provide direct access to the storage system, and jumped right in.

Up until now, I was using my own DIY NAS server to provide various services to the homelab environment, including NFS. To be honest with you, it worked great, but it did not have a CSI driver.

Pre-requisites

We are using our Kubernetes homelab to deploy democratic-csi.

You will need a TrueNAS Core server. Note that installation of TrueNAS is beyond the scope of this article.

The Plan

In this article, we are going to do the following:

- Configure TrueNAS Core 12.0-U3 to provide NFS services.

- Configure democratic-csi for Kubernetes using Helm.

- Create Kubernetes persistent volumes.

The IP address of the TrueNAS server is 10.11.1.5.

Our Kubernetes nodes are pre-configured to use NFS, therefore no change is required. If you’re deploying a new set of CentOS servers, make sure to install the package nfs-utils.

Configure TrueNAS Core

A shout-out to Jonathan Gazeley and his blog post that helped me to get TrueNAS configured in no time.

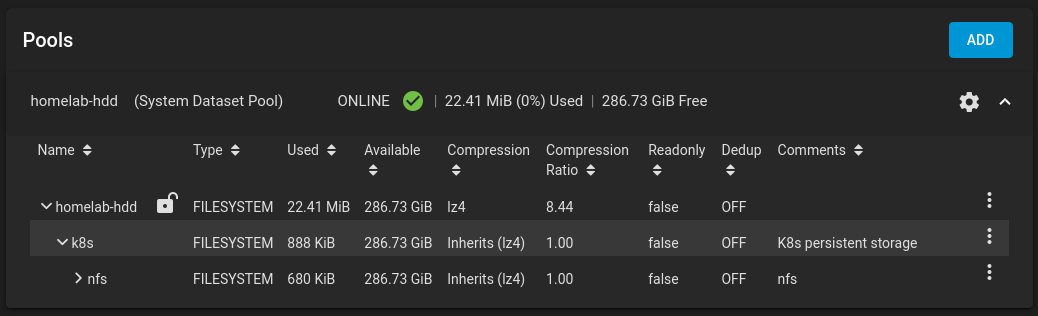

Create a Storage Pool

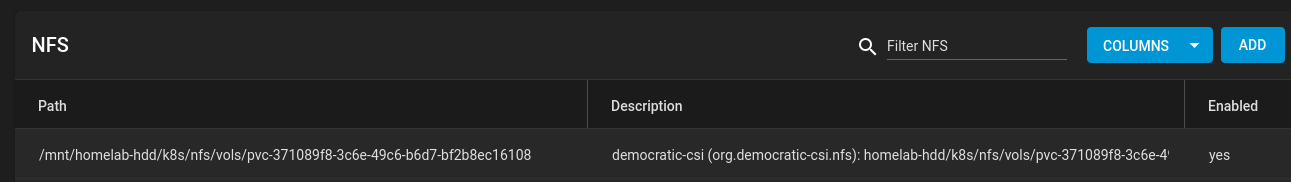

We’ve created a storage pool called homelab-hdd, with the nested dataset homelab-hdd/k8s/nfs inside it.
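The Helm values file further down points democratic-csi at two child datasets of this one: vols for volumes and snaps for detached snapshots. If you would rather create them up front than rely on the driver, here is a minimal sketch that prints the matching zfs commands. The function name dataset_commands is hypothetical, and the vols/snaps suffixes simply mirror the values file used in this article.

```shell
# Hypothetical helper: print the zfs commands that create the parent
# datasets used later as datasetParentName and
# detachedSnapshotsDatasetParentName in freenas-nfs.yaml.
dataset_commands() {
  local base="$1"   # e.g. homelab-hdd/k8s/nfs
  # -p creates any missing intermediate datasets and succeeds
  # if the dataset already exists.
  printf 'zfs create -p %s/vols\n' "$base"
  printf 'zfs create -p %s/snaps\n' "$base"
}
```

The output can be pasted into the TrueNAS Shell page, or, once passwordless SSH is in place as described in the next steps, piped to the NAS with `dataset_commands homelab-hdd/k8s/nfs | ssh -i ./truenas_rsa root@10.11.1.5 sh`.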

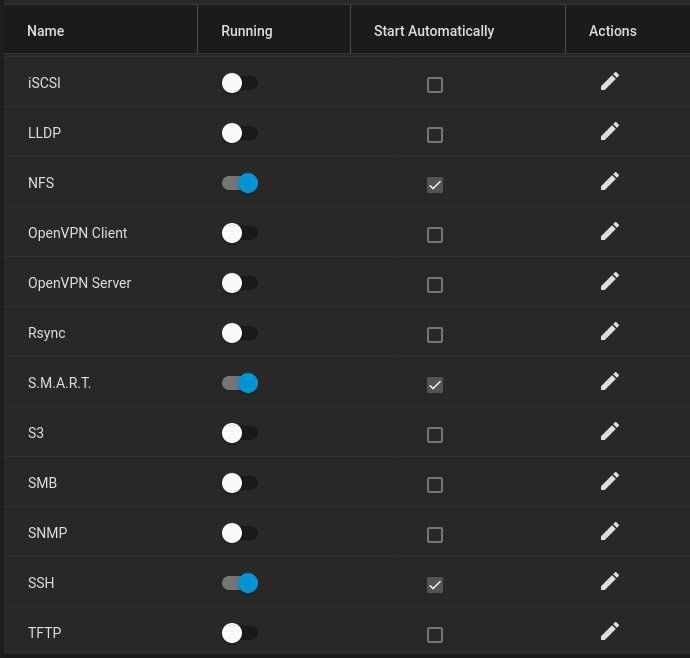

Enable NFS and SSH Services

We are only interested in NFS and SSH; no other service is required. Note that S.M.A.R.T. should be enabled by default.

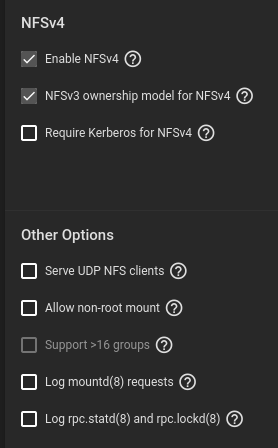

Configure NFS Service

Make sure to enable the following:

- Enable NFSv4.

- NFSv3 ownership model for NFSv4.

Configure SSH Passwordless Authentication

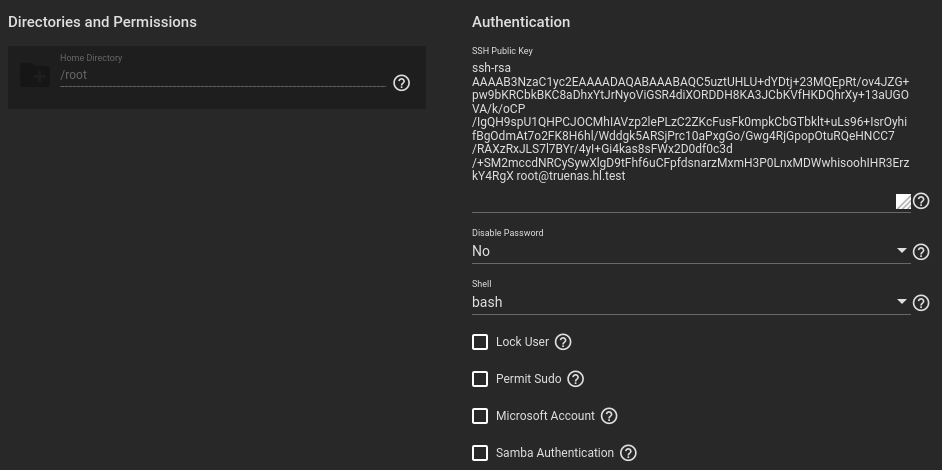

Kubernetes will require access to the TrueNAS API with a privileged user. For the homelab server, we will use the root user with passwordless authentication.

Generate an SSH keypair:

```
$ ssh-keygen -t rsa -C [email protected] -f truenas_rsa
$ cat ./truenas_rsa.pub
ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC5uztUHLU+dYDtj+23MQEpRt/ov4JZG+pw9bKRCbkBKC8aDhxYtJrNyoViGSR4diXORDDH8KA3JCbKVfHKDQhrXy+13aUGOVA/k/oCP/IgQH9spU1QHPCJOCMhIAVzp2lePLzC2ZKcFusFk0mpkCbGTbklt+uLs96+IsrOyhifBgOdmAt7o2FK8H6hl/Wddgk5ARSjPrc10aPxgGo/Gwg4RjGpopOtuRQeHNCC7/RAXzRxJLS7l7BYr/4yI+Gi4kas8sFWx2D0df0c3d/+SM2mccdNRCySywXlgD9tFhf6uCFpfdsnarzMxmH3P0LnxMDWwhisoohIHR3ErzkY4RgX [email protected]
```
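OpenSSH 7.8 and later write new private keys in the OpenSSH format (`-----BEGIN OPENSSH PRIVATE KEY-----`) unless you pass `-m PEM`, which produces the older `-----BEGIN RSA PRIVATE KEY-----` header shown in the values file further down. One reader reports in the comments that the header made a difference for them, so it may be worth checking which format you actually have. A small sketch; key_format is a hypothetical helper that only inspects the first line of the file:

```shell
# Hypothetical helper: report whether a private key file uses the legacy
# PEM header or the newer OpenSSH header, based on its first line only.
key_format() {
  case "$(head -n 1 "$1")" in
    "-----BEGIN RSA PRIVATE KEY-----")     echo "pem" ;;
    "-----BEGIN OPENSSH PRIVATE KEY-----") echo "openssh" ;;
    *)                                      echo "unknown" ;;
  esac
}
```

For example, `key_format ./truenas_rsa` prints pem, openssh or unknown. Adding `-m PEM` to the ssh-keygen command above should give you a PEM key from the start.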

Navigate to Accounts > Users > root and add the public SSH key. Also change the shell to bash.

Verify that you can SSH into the TrueNAS server using the SSH key and the root account:

```
$ ssh -i ./truenas_rsa root@10.11.1.5
Last login: Fri Apr 23 19:47:07 2021
FreeBSD 12.2-RELEASE-p6 f2858df162b(HEAD) TRUENAS

TrueNAS (c) 2009-2021, iXsystems, Inc.
All rights reserved.
TrueNAS code is released under the modified BSD license with some
files copyrighted by (c) iXsystems, Inc.

For more information, documentation, help or support, go here:
http://truenas.com
Welcome to TrueNAS
truenas#
```

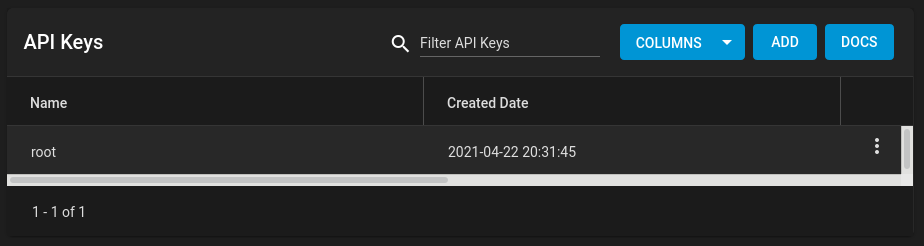

Generate a TrueNAS API Key

Navigate to Settings (cog icon) > API Keys and generate a key. Give it a name, e.g. root. The key will be used to authenticate with the TrueNAS HTTP server.
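Before handing the key to Kubernetes, it can help to confirm that TrueNAS accepts it. The sketch below assumes the TrueNAS REST API v2.0 (matching `apiVersion: 2` in the values file), sends the key as a bearer token to the `/api/v2.0/system/info` endpoint, and uses plain HTTP on port 80 like the httpConnection settings later on. Both function names are hypothetical.

```shell
# Hypothetical helper: build a TrueNAS REST API v2.0 URL (plain HTTP).
truenas_api_url() {
  printf 'http://%s/api/v2.0/%s\n' "$1" "$2"
}

# Hypothetical helper: call system/info with the API key and print only
# the HTTP status code. 200 suggests the key was accepted, 401 that it was not.
truenas_api_check() {
  local host="$1" key="$2"
  curl -s -o /dev/null -w '%{http_code}\n' \
    -H "Authorization: Bearer ${key}" \
    "$(truenas_api_url "$host" system/info)"
}
```

Usage: `truenas_api_check 10.11.1.5 "$TRUENAS_API_KEY"`, with the key exported in an environment variable rather than typed inline.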

Configure Kubernetes democratic-csi

Helm Installation

```
$ helm repo add democratic-csi https://democratic-csi.github.io/charts/
$ helm repo update
$ helm search repo democratic-csi/
```

Configure Helm Values File

The content of our freenas-nfs.yaml file can be seen below. Example configurations can be found in democratic-csi’s GitHub repository.

```yaml
csiDriver:
  name: "org.democratic-csi.nfs"

storageClasses:
- name: freenas-nfs-csi
  defaultClass: false
  reclaimPolicy: Retain
  volumeBindingMode: Immediate
  allowVolumeExpansion: true
  parameters:
    fsType: nfs
  mountOptions:
  - noatime
  - nfsvers=4
  secrets:
    provisioner-secret:
    controller-publish-secret:
    node-stage-secret:
    node-publish-secret:
    controller-expand-secret:

driver:
  config:
    driver: freenas-nfs
    instance_id:
    httpConnection:
      protocol: http
      host: 10.11.1.5
      port: 80
      # This is the API key that we generated previously
      apiKey: 1-fAP3JzEaXXLGyKam8ZnotarealkeyIKJ6nnKUX5ARd5v0pw0cADEkqnH1S079v
      username: root
      allowInsecure: true
      apiVersion: 2
    sshConnection:
      host: 10.11.1.5
      port: 22
      username: root
      # This is the SSH key that we generated for passwordless authentication
      privateKey: |
        -----BEGIN RSA PRIVATE KEY-----
        MIIEogIBAAKCAQEAubs7VBy1PnWA7Y/ttzEBKUbf6L+CWRvqcPWykQm5ASgvGg4c
        [...]
        tl4biLpseFQgV3INtM0NNW4+LlTSAnjApDtNzttX/h5HTBLHyoc=
        -----END RSA PRIVATE KEY-----
    zfs:
      # Make sure to use the storage pool that was created previously
      datasetParentName: homelab-hdd/k8s/nfs/vols
      detachedSnapshotsDatasetParentName: homelab-hdd/k8s/nfs/snaps
      datasetEnableQuotas: true
      datasetEnableReservation: false
      datasetPermissionsMode: "0777"
      datasetPermissionsUser: root
      datasetPermissionsGroup: wheel
    nfs:
      shareHost: 10.11.1.5
      shareAlldirs: false
      shareAllowedHosts: []
      shareAllowedNetworks: []
      shareMaprootUser: root
      shareMaprootGroup: wheel
      shareMapallUser: ""
      shareMapallGroup: ""
```
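Because `privateKey` is a YAML block scalar, every line of the key has to carry the same indentation, which is easy to get wrong when pasting. A minimal sketch of a hypothetical helper that indents the key file for you, assuming the two-space nesting of driver > config > sshConnection used in this values file (eight spaces for the key body):

```shell
# Hypothetical helper: print a private key file indented by N spaces
# (default 8) so it can be pasted under "privateKey: |" in freenas-nfs.yaml.
indent_key() {
  local file="$1" width="${2:-8}"
  local pad
  pad=$(printf '%*s' "$width" '')   # a string of $width spaces
  sed "s/^/${pad}/" "$file"
}
```

For example, `indent_key ./truenas_rsa 8`. Alternatively, Helm's `--set-file` flag should be able to load the key straight from disk and sidestep the indentation entirely, e.g. `--set-file driver.config.sshConnection.privateKey=./truenas_rsa` on the helm upgrade command below, assuming the chart passes that value through unchanged.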

Install the democratic-csi Helm Chart

```
$ helm upgrade \
  --install \
  --create-namespace \
  --values freenas-nfs.yaml \
  --namespace democratic-csi \
  zfs-nfs democratic-csi/democratic-csi
```

Verify that the pods are up and running and that the storage class has been created:

```
$ kubectl -n democratic-csi get pods
NAME                                                 READY   STATUS    RESTARTS   AGE
zfs-nfs-democratic-csi-controller-5dbfcb7896-89tqv   4/4     Running   0          39h
zfs-nfs-democratic-csi-node-6nz29                    3/3     Running   0          39h
zfs-nfs-democratic-csi-node-bdt47                    3/3     Running   0          39h
zfs-nfs-democratic-csi-node-c7p6h                    3/3     Running   0          39h

$ kubectl get sc
NAME              PROVISIONER              RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
freenas-nfs-csi   org.democratic-csi.nfs   Retain          Immediate           true                   39h
```
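With more nodes, scanning the READY and STATUS columns by eye gets tedious. A small awk-based sketch (the function name is hypothetical) that reads `kubectl get pods --no-headers` output and prints every pod that is not fully ready or not Running:

```shell
# Hypothetical helper: read "kubectl get pods --no-headers" output on stdin
# and print the name of each pod whose READY count is incomplete or whose
# STATUS is not "Running".
not_ready_pods() {
  awk '{
    split($2, r, "/")                      # READY column, e.g. "3/3"
    if (r[1] != r[2] || $3 != "Running") print $1
  }'
}
```

Usage: `kubectl -n democratic-csi get pods --no-headers | not_ready_pods` prints nothing when every pod is healthy.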

Create Persistent Volumes

We are going to create a persistent volume for Grafana. See the content of the file grafana-pvc.yml below.

```yaml
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-pvc-grafana
  namespace: monitoring
  labels:
    app: grafana
  annotations:
    volume.beta.kubernetes.io/storage-class: "freenas-nfs-csi"
spec:
  storageClassName: freenas-nfs-csi
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 500Mi
```

Create a persistent volume claim:

```
$ kubectl apply -f ./grafana-pvc.yml
```

Verify:

```
$ kubectl -n monitoring get pvc -l app=grafana
NAME              STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS      AGE
nfs-pvc-grafana   Bound    pvc-371089f8-3c6e-49c6-b6d7-bf2b8ec16108   500Mi      RWO            freenas-nfs-csi   18h
```
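Because the storage class uses `volumeBindingMode: Immediate`, the claim should reach Bound without any pod consuming it. In scripts, `kubectl wait` can block until that happens instead of polling `get pvc` by hand. A sketch, assuming a kubectl recent enough to support `--for=jsonpath` (added in v1.23); wait_for_pvc_bound is a hypothetical wrapper:

```shell
# Hypothetical wrapper: block until a PVC reports phase Bound, or fail
# once the timeout (default 120s) expires.
wait_for_pvc_bound() {
  local ns="$1" pvc="$2" timeout="${3:-120s}"
  kubectl -n "$ns" wait --for=jsonpath='{.status.phase}'=Bound \
    "pvc/${pvc}" --timeout="$timeout"
}
```

Usage: `wait_for_pvc_bound monitoring nfs-pvc-grafana 120s`.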


References

https://github.com/democratic-csi/democratic-csi

It’s nice, and I followed the guide.

But at the end I get:

```
$ kubectl logs -n democratic-csi zfs-nfs-democratic-csi-controller-ffbb878db-w7ptj external-provisioner
I0523 04:01:17.938148 1 feature_gate.go:243] feature gates: &{map[]}
I0523 04:01:17.938209 1 csi-provisioner.go:132] Version: v2.1.0
I0523 04:01:17.938222 1 csi-provisioner.go:155] Building kube configs for running in cluster...
I0523 04:01:17.954599 1 connection.go:153] Connecting to unix:///csi-data/csi.sock
I0523 04:01:17.957244 1 common.go:111] Probing CSI driver for readiness
I0523 04:01:17.957255 1 connection.go:182] GRPC call: /csi.v1.Identity/Probe
I0523 04:01:17.957268 1 connection.go:183] GRPC request: {}
I0523 04:01:19.033063 1 connection.go:185] GRPC response: {}
I0523 04:01:19.033243 1 connection.go:186] GRPC error: rpc error: code = Internal desc = Error: connect EHOSTUNREACH 172.17.3.20:22
E0523 04:01:19.033271 1 csi-provisioner.go:193] CSI driver probe failed: rpc error: code = Internal desc = Error: connect EHOSTUNREACH 172.17.3.20:22
```

Any idea what could be wrong?

Hi, the error message suggests that the TrueNAS host is unreachable. Can you check the following:

1. Is 172.17.3.20 the IP address of the TrueNAS server?

2. Is the SSH service on the TrueNAS server enabled?

3. Is the firewall on the TrueNAS server configured to allow incoming SSH traffic?

4. Can you SSH into the TrueNAS server as the root user from a Kubernetes host?
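Points 1 to 4 can be partly scripted. A rough sketch of a hypothetical check_tcp helper that uses bash’s /dev/tcp pseudo-device (so it needs bash rather than plain sh, and no nc or telnet) to test whether a TCP port on the NAS answers from a given Kubernetes node:

```shell
# Hypothetical helper: succeed if a TCP connection to HOST:PORT opens
# within SECS seconds (default 3). Relies on bash's /dev/tcp redirection.
check_tcp() {
  local host="$1" port="$2" secs="${3:-3}"
  timeout "$secs" bash -c ": </dev/tcp/${host}/${port}" 2>/dev/null
}
```

For example, `check_tcp 172.17.3.20 22 && echo "SSH port reachable"`, and the same for port 80 (the TrueNAS API). Note that this tests the node’s network path; the controller pod connects from the pod network, so a problem such as an overlapping pod CIDR can still break things even when this check passes.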

Hi Lisenet, everything works as indicated. Thank you.

Quick question: what if I have two FreeNAS/TrueNAS servers and I want to use both of them for PVs in the cluster, so that I do not have to put every deployment on one NFS server? What values should I replace in freenas-nfs.yml? I’d appreciate your help.

Hi, thanks, I’m glad it worked for you.

May I ask what it is that you are trying to achieve? If you need high availability for storage, then I’d suggest using multiple disks with TrueNAS in a RAID. If you need high availability for the NFS server, then you should use one that has two separate power circuits and two UPS systems, two network cards with a bonded interface for redundancy, etc.

If you want to use two instances of TrueNAS, then you will have to create two PVs, one on each storage array. Please see the Kubernetes documentation for more info about PVs.

Hi Lisenet,

Thanks very much for your guide, it worked well!

But I still have a problem. I tried to use the PVC that we created as an “existingClaim” in a MariaDB configuration file, and I also specified the StorageClass, but nothing was applied to the existing PVC. Instead, a new PVC was created and left pending…

Perhaps you can help me with that ?

Hello everyone

Thank you for the documentation! I’m stuck with container creation. The pods are always in the “ContainerCreating” state.

```
seaser@homeserver03:~$ kubectl -n democratic-csi get pods
NAME                                                 READY   STATUS              RESTARTS   AGE
zfs-nfs-democratic-csi-node-q8pfr                    0/3     ContainerCreating   0          4m36s
zfs-nfs-democratic-csi-node-z4vgd                    0/3     ContainerCreating   0          4m36s
zfs-nfs-democratic-csi-node-rgqzp                    0/3     ContainerCreating   0          4m36s
zfs-nfs-democratic-csi-controller-5c4f449c6d-mjv2k   4/4     Running             0          4m36s
```

```
I0622 05:39:36.845632 1 csi-provisioner.go:132] Version: v2.1.0
I0622 05:39:36.845649 1 csi-provisioner.go:155] Building kube configs for running in cluster...
I0622 05:39:36.853723 1 connection.go:153] Connecting to unix:///csi-data/csi.sock
I0622 05:39:39.942724 1 common.go:111] Probing CSI driver for readiness
I0622 05:39:39.942739 1 connection.go:182] GRPC call: /csi.v1.Identity/Probe
I0622 05:39:39.942743 1 connection.go:183] GRPC request: {}
I0622 05:39:40.053698 1 connection.go:185] GRPC response: {"ready":{"value":true}}
I0622 05:39:40.053801 1 connection.go:186] GRPC error:
I0622 05:39:40.053809 1 connection.go:182] GRPC call: /csi.v1.Identity/GetPluginInfo
I0622 05:39:40.053812 1 connection.go:183] GRPC request: {}
I0622 05:39:40.056092 1 connection.go:185] GRPC response: {"name":"org.democratic-csi.nfs","vendor_version":"1.2.0"}
I0622 05:39:40.056134 1 connection.go:186] GRPC error:
I0622 05:39:40.056142 1 csi-provisioner.go:202] Detected CSI driver org.democratic-csi.nfs
I0622 05:39:40.056151 1 connection.go:182] GRPC call: /csi.v1.Identity/GetPluginCapabilities
I0622 05:39:40.056158 1 connection.go:183] GRPC request: {}
I0622 05:39:40.059424 1 connection.go:185] GRPC response: {"capabilities":[{"Type":{"Service":{"type":1}}},{"Type":{"VolumeExpansion":{"type":1}}}]}
I0622 05:39:40.059558 1 connection.go:186] GRPC error:
I0622 05:39:40.059568 1 connection.go:182] GRPC call: /csi.v1.Controller/ControllerGetCapabilities
I0622 05:39:40.059573 1 connection.go:183] GRPC request: {}
I0622 05:39:40.061972 1 connection.go:185] GRPC response: {"capabilities":[{"Type":{"Rpc":{"type":1}}},{"Type":{"Rpc":{"type":3}}},{"Type":{"Rpc":{"type":4}}},{"Type":{"Rpc":{"type":5}}},{"Type":{"Rpc":{"type":6}}},{"Type":{"Rpc":{"type":7}}},{"Type":{"Rpc":{"type":9}}}]}
I0622 05:39:40.062058 1 connection.go:186] GRPC error:
I0622 05:39:40.062111 1 csi-provisioner.go:244] CSI driver does not support PUBLISH_UNPUBLISH_VOLUME, not watching VolumeAttachments
I0622 05:39:40.062779 1 controller.go:753] Using saving PVs to API server in background
I0622 05:39:40.063639 1 leaderelection.go:243] attempting to acquire leader lease democratic-csi/org-democratic-csi-nfs...
```

Any advice how to troubleshoot this issue?

Could you post the deployment and daemon set logs?

Hi Lisenet,

It was working fine for me but for the past few days now I have been getting the following error in the logs:

```
I0728 14:48:13.040870 1 feature_gate.go:243] feature gates: &{map[]}
I0728 14:48:13.040926 1 csi-provisioner.go:138] Version: v2.2.2
I0728 14:48:13.040951 1 csi-provisioner.go:161] Building kube configs for running in cluster...
I0728 14:48:13.048174 1 connection.go:153] Connecting to unix:///csi-data/csi.sock
I0728 14:48:13.048711 1 common.go:111] Probing CSI driver for readiness
I0728 14:48:13.048734 1 connection.go:182] GRPC call: /csi.v1.Identity/Probe
I0728 14:48:13.048740 1 connection.go:183] GRPC request: {}
I0728 14:48:13.050782 1 connection.go:185] GRPC response: {}
I0728 14:48:13.051093 1 connection.go:186] GRPC error: rpc error: code = Unavailable desc = Bad Gateway: HTTP status code 502; transport: missing content-type field
E0728 14:48:13.051126 1 csi-provisioner.go:203] CSI driver probe failed: rpc error: code = Unavailable desc = Bad Gateway: HTTP status code 502; transport: missing content-type field
```

Hi Adeel, have you made any changes to your config at all? Are you using SSH password or key? Are you using root?

Check the TrueNAS log /var/log/auth.log.

Hey, I have the same error. Have you found any solution to it?

I solved this problem:

https://github.com/democratic-csi/democratic-csi/issues/200#issuecomment-1340625770

GREAT walkthrough! Worked perfectly… however, how do I add ANOTHER storage class using a different disk? I created a 2nd pool for a different disk array… so do I create ANOTHER Helm chart, or can I update the original and just append a new ZFS and NFS block with a different path?

Thanks Eric. While I’ve not tried this, I think you should be able to define it in the same Helm chart under storageClasses:. Make sure to give it a different name though.

Hi Lisenet, it’s not working.

freenas-nfs.yaml:

```yaml
csiDriver:
  name: "org.democratic-csi.nfs"
storageClasses:
- name: freenas-nfs-csi
  defaultClass: false
  reclaimPolicy: Delete
  volumeBindingMode: Immediate
  allowVolumeExpansion: true
  parameters:
    fsType: nfs
  mountOptions:
  - noatime
  - nfsvers=4
  secrets:
    provisioner-secret:
    controller-publish-secret:
    node-stage-secret:
    node-publish-secret:
    controller-expand-secret:
driver:
  config:
    driver: freenas-nfs
    instance_id:
    httpConnection:
      protocol: http
      host: 192.168.30.13
      port: 80
      username: root
      password: "password"
      allowInsecure: true
    sshConnection:
      host: 192.168.30.13
      port: 22
      username: root
      # use either password or key
      password: "pwassword"
    zfs:
      datasetParentName: default/k8s/nfs/v #pool/dataset/dataset/dataset/dataset
      detachedSnapshotsDatasetParentName: default/k8s/nfs/s #pool/dataset/dataset/dataset/dataset
      datasetEnableQuotas: true
      datasetEnableReservation: false
      datasetPermissionsMode: "0777"
      datasetPermissionsUser: root
      datasetPermissionsGroup: wheel
    nfs:
      shareHost: 192.168.30.13
      shareAlldirs: false
      shareAllowedHosts: []
      shareAllowedNetworks: []
      shareMaprootUser: root
      shareMaprootGroup: wheel
      shareMapallUser: ""
      shareMapallGroup: ""
```

– truenas ssh connection test

– freenas NFS Service settings

```
$ k describe po/zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2 -n democratic-csi
Name:         zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2
Namespace:    democratic-csi
Priority:     0
Node:         kube-node03/192.168.30.63
Start Time:   Mon, 24 Oct 2022 13:41:49 +0900
Labels:       app.kubernetes.io/component=controller-linux
              app.kubernetes.io/csi-role=controller
              app.kubernetes.io/instance=zfs-nfs
              app.kubernetes.io/managed-by=Helm
              app.kubernetes.io/name=democratic-csi
              pod-template-hash=6db5558c48
Annotations:  checksum/configmap: 4c738d703c418eb6a75fa3a097249ef9bf02c0678a385c5bb41bd6c2416beef9
              checksum/secret: a31e63d7cfb6b568f3328d3ad9e3a71ee33725559a9f2dc5c64a1cfe601f4ffd
              cni.projectcalico.org/containerID: 7ea282f3f6c20e2b814d6154e219e6166b7b617df4d279eb749a54487d549df1
              cni.projectcalico.org/podIP: 192.168.161.46/32
              cni.projectcalico.org/podIPs: 192.168.161.46/32
Status:       Running
IP:           192.168.161.46
IPs:
  IP:           192.168.161.46
Controlled By:  ReplicaSet/zfs-nfs-democratic-csi-controller-6db5558c48
Containers:
  external-provisioner:
    Container ID:  docker://db54ec6363dfeed2118d347dea9969ba2e2e844ca85af7eee867d0e5d732800b
    Image:         k8s.gcr.io/sig-storage/csi-provisioner:v3.1.0
    Image ID:      docker-pullable://k8s.gcr.io/sig-storage/csi-provisioner@sha256:122bfb8c1edabb3c0edd63f06523e6940d958d19b3957dc7b1d6f81e9f1f6119
    Port:
    Host Port:
    Args:
      --v=5
      --leader-election
      --leader-election-namespace=democratic-csi
      --timeout=90s
      --worker-threads=10
      --extra-create-metadata
      --csi-address=/csi-data/csi.sock
    State:          Waiting
      Reason:       CrashLoopBackOff
    Last State:     Terminated
      Reason:       Error
      Exit Code:    1
      Started:      Mon, 24 Oct 2022 13:48:45 +0900
      Finished:     Mon, 24 Oct 2022 13:48:45 +0900
    Ready:          False
    Restart Count:  6
    Environment:
      NODE_NAME:   (v1:spec.nodeName)
      NAMESPACE:  democratic-csi (v1:metadata.namespace)
      POD_NAME:   zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2 (v1:metadata.name)
    Mounts:
      /csi-data from socket-dir (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dsq8c (ro)
  external-resizer:
    Container ID:  docker://0a129107c481e5630a58a2ee0c4c8fe8e0e91bfd4108d1f3b399c9f174792179
    Image:         k8s.gcr.io/sig-storage/csi-resizer:v1.4.0
    Image ID:      docker-pullable://k8s.gcr.io/sig-storage/csi-resizer@sha256:9ebbf9f023e7b41ccee3d52afe39a89e3ddacdbb69269d583abfc25847cfd9e4
    Port:
    Host Port:
    Args:
      --v=5
      --leader-election
      --leader-election-namespace=democratic-csi
      --timeout=90s
      --workers=10
      --csi-address=/csi-data/csi.sock
    State:          Waiting
      Reason:       CrashLoopBackOff
    Last State:     Terminated
      Reason:       Error
      Exit Code:    255
      Started:      Mon, 24 Oct 2022 13:48:45 +0900
      Finished:     Mon, 24 Oct 2022 13:48:45 +0900
    Ready:          False
    Restart Count:  6
    Environment:
      NODE_NAME:   (v1:spec.nodeName)
      NAMESPACE:  democratic-csi (v1:metadata.namespace)
      POD_NAME:   zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2 (v1:metadata.name)
    Mounts:
      /csi-data from socket-dir (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dsq8c (ro)
  external-snapshotter:
    Container ID:  docker://689c7ad05a56cd045cc467f928e8148a8557d803df88fb8f5a328fde09d26d35
    Image:         k8s.gcr.io/sig-storage/csi-snapshotter:v5.0.1
    Image ID:      docker-pullable://k8s.gcr.io/sig-storage/csi-snapshotter@sha256:89e900a160a986a1a7a4eba7f5259e510398fa87ca9b8a729e7dec59e04c7709
    Port:
    Host Port:
    Args:
      --v=5
      --leader-election
      --leader-election-namespace=democratic-csi
      --timeout=90s
      --worker-threads=10
      --csi-address=/csi-data/csi.sock
    State:          Waiting
      Reason:       CrashLoopBackOff
    Last State:     Terminated
      Reason:       Error
      Exit Code:    1
      Started:      Mon, 24 Oct 2022 13:48:45 +0900
      Finished:     Mon, 24 Oct 2022 13:48:45 +0900
    Ready:          False
    Restart Count:  6
    Environment:
      NODE_NAME:   (v1:spec.nodeName)
      NAMESPACE:  democratic-csi (v1:metadata.namespace)
      POD_NAME:   zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2 (v1:metadata.name)
    Mounts:
      /csi-data from socket-dir (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dsq8c (ro)
  csi-driver:
    Container ID:  docker://310f97ba3898a8e020a082cbeeba3a62a8e3a6c75be1088a3984d4e6bf8da5f3
    Image:         docker.io/democraticcsi/democratic-csi:latest
    Image ID:      docker-pullable://democraticcsi/democratic-csi@sha256:9633b08bf21d93dec186e8c4b7a39177fb6d59fd4371c88700097b9cc0aa4712
    Port:
    Host Port:
    Args:
      --csi-version=1.5.0
      --csi-name=org.democratic-csi.nfs
      --driver-config-file=/config/driver-config-file.yaml
      --log-level=info
      --csi-mode=controller
      --server-socket=/csi-data/csi.sock.internal
    State:          Running
      Started:      Mon, 24 Oct 2022 13:48:48 +0900
    Last State:     Terminated
      Reason:       Error
      Exit Code:    1
      Started:      Mon, 24 Oct 2022 13:46:05 +0900
      Finished:     Mon, 24 Oct 2022 13:47:13 +0900
    Ready:          True
    Restart Count:  5
    Liveness:       exec [bin/liveness-probe --csi-version=1.5.0 --csi-address=/csi-data/csi.sock.internal] delay=10s timeout=15s period=60s #success=1 #failure=3
    Environment:
      NODE_EXTRA_CA_CERTS:  /tmp/certs/extra-ca-certs.crt
    Mounts:
      /config from config (rw)
      /csi-data from socket-dir (rw)
      /tmp/certs from extra-ca-certs (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dsq8c (ro)
  csi-proxy:
    Container ID:   docker://4fd59cc00d890dcbd4998a690578ebb064bd42e8cbf4b7df1b3163e95c0efef1
    Image:          docker.io/democraticcsi/csi-grpc-proxy:v0.5.3
    Image ID:       docker-pullable://democraticcsi/csi-grpc-proxy@sha256:4d65ca1cf17d941a8df668b8fe2f1c0cfa512c8b0dbef3ff89a4cd405e076923
    Port:
    Host Port:
    State:          Running
      Started:      Mon, 24 Oct 2022 13:41:55 +0900
    Ready:          True
    Restart Count:  0
    Environment:
      BIND_TO:   unix:///csi-data/csi.sock
      PROXY_TO:  unix:///csi-data/csi.sock.internal
    Mounts:
      /csi-data from socket-dir (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dsq8c (ro)
Conditions:
  Type              Status
  Initialized       True
  Ready             False
  ContainersReady   False
  PodScheduled      True
Volumes:
  socket-dir:
    Type:       EmptyDir (a temporary directory that shares a pod's lifetime)
    Medium:
    SizeLimit:
  config:
    Type:        Secret (a volume populated by a Secret)
    SecretName:  zfs-nfs-democratic-csi-driver-config
    Optional:    false
  extra-ca-certs:
    Type:      ConfigMap (a volume populated by a ConfigMap)
    Name:      zfs-nfs-democratic-csi
    Optional:  false
  kube-api-access-dsq8c:
    Type:                    Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds:  3607
    ConfigMapName:           kube-root-ca.crt
    ConfigMapOptional:
    DownwardAPI:             true
QoS Class:                   BestEffort
Node-Selectors:              kubernetes.io/os=linux
Tolerations:                 node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:
  Type     Reason     Age                     From               Message
  ----     ------     ----                    ----               -------
  Normal   Scheduled  7m54s                   default-scheduler  Successfully assigned democratic-csi/zfs-nfs-democratic-csi-controller-6db5558c48-fp9n2 to kube-node03
  Normal   Pulling    7m52s                   kubelet            Pulling image "docker.io/democraticcsi/democratic-csi:latest"
  Normal   Created    7m48s                   kubelet            Created container csi-proxy
  Normal   Pulled     7m48s                   kubelet            Container image "docker.io/democraticcsi/csi-grpc-proxy:v0.5.3" already present on machine
  Normal   Started    7m48s                   kubelet            Started container csi-driver
  Normal   Created    7m48s                   kubelet            Created container csi-driver
  Normal   Pulled     7m48s                   kubelet            Successfully pulled image "docker.io/democraticcsi/democratic-csi:latest" in 4.198052133s
  Normal   Started    7m48s                   kubelet            Started container csi-proxy
  Normal   Started    7m25s (x2 over 7m52s)   kubelet            Started container external-snapshotter
  Normal   Created    7m25s (x2 over 7m53s)   kubelet            Created container external-snapshotter
  Normal   Pulled     7m25s (x2 over 7m53s)   kubelet            Container image "k8s.gcr.io/sig-storage/csi-snapshotter:v5.0.1" already present on machine
  Normal   Started    7m25s (x2 over 7m53s)   kubelet            Started container external-resizer
  Normal   Created    7m25s (x2 over 7m53s)   kubelet            Created container external-resizer
  Normal   Pulled     7m25s (x2 over 7m53s)   kubelet            Container image "k8s.gcr.io/sig-storage/csi-resizer:v1.4.0" already present on machine
  Normal   Started    7m25s (x2 over 7m53s)   kubelet            Started container external-provisioner
  Normal   Created    7m25s (x2 over 7m53s)   kubelet            Created container external-provisioner
  Normal   Pulled     7m25s (x2 over 7m53s)   kubelet            Container image "k8s.gcr.io/sig-storage/csi-provisioner:v3.1.0" already present on machine
  Warning  BackOff    2m41s (x24 over 7m21s)  kubelet            Back-off restarting failed container
```

– kube-master node log

Hi, can you provide container logs please?

thank you Lisenet

my k8s cluster is calico-cni 192.168.0.0/16

Truenas is 192.168.30.13

it’s my fault

k8s 192.168.30.0/24 , Truenas 10.10.10.0/24 (Network Segmentation)

Now working !!!

```
$ k get po -n democratic-csi
NAME                                                 READY   STATUS    RESTARTS   AGE
zfs-nfs-democratic-csi-controller-55cb498d9c-d95sr   5/5     Running   0          22m
zfs-nfs-democratic-csi-node-6rmrg                    4/4     Running   0          22m
zfs-nfs-democratic-csi-node-7rfqf                    4/4     Running   0          22m
zfs-nfs-democratic-csi-node-qk7vv                    4/4     Running   0          22m

$ k get pvc
NAME             STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS      AGE
test-claim-nfs   Bound    pvc-1dcf25e6-9c19-48c6-9262-5f1c8ce66cc5   1Gi        RWO            freenas-nfs-csi   10m
```

Oh, overlapping network ranges, I see. No worries, I’m glad you sorted it out.
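Overlap like this is easy to miss. In the case above, the TrueNAS address 192.168.30.13 falls inside Calico’s 192.168.0.0/16 pod range, so traffic to the NAS can be misrouted. A pure-bash sketch (hypothetical helper names, IPv4 only) to check whether an address sits inside a CIDR block:

```shell
# Hypothetical helper: convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Hypothetical helper: succeed if IPv4 address $1 lies inside CIDR block $2,
# e.g. ip_in_cidr 192.168.30.13 192.168.0.0/16
ip_in_cidr() {
  local ip="$1" net="${2%/*}" bits="${2#*/}"
  # Network mask with the top $bits bits set, kept within 32 bits.
  local mask=$(( bits == 0 ? 0 : (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}
```

Usage: `ip_in_cidr 192.168.30.13 192.168.0.0/16 && echo "TrueNAS address overlaps the pod CIDR"`.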

Pods crashed with mysterious errors. I did a lot of digging, and found that it started working when I used “OPENSSH” instead of “RSA” in the key part of the config, as below.

```
-----BEGIN OPENSSH PRIVATE KEY-----
.....
-----END OPENSSH PRIVATE KEY-----
```

A sample error I encountered was `GRPC error: rpc error: code = Unavailable desc = unexpected HTTP status code received from server: 502 (Bad Gateway); malformed header: missing HTTP content-type`.

I hope it helps anyone with the same problem.

And one more thing: datasetPermissionsUser and datasetPermissionsGroup must both be 0 now, see https://github.com/democratic-csi/democratic-csi/blob/master/CHANGELOG.md#v153.

```
zfs:
  .....
  datasetPermissionsUser: 0
  datasetPermissionsGroup: 0
nfs:
  .....
  shareMaprootUser: 0
  shareMaprootGroup: 0
```
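If you are upgrading an existing install, a quick grep can flag any of the four keys above that still use a user or group name instead of a numeric ID. A sketch with a hypothetical helper name; it only looks at these four keys and treats any value that does not start with a digit as a name:

```shell
# Hypothetical helper: print (with line numbers) any of the four ID keys
# whose value does not start with a digit, e.g. "root" or "wheel".
flag_named_ids() {
  grep -nE '^[[:space:]]*(datasetPermissions(User|Group)|shareMaproot(User|Group)):[[:space:]]*[^0-9[:space:]#]' "$1"
}
```

Usage: `flag_named_ids freenas-nfs.yaml`; no output means none of those keys need changing.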

Not sure what’s going on, but the node pods are all stuck in ContainerCreating (stuck there for hours). The controller pod is running so I’m not sure what I should be looking at.

Check the latest events in the K8s cluster to see if anything’s been logged:

Also check the kubelet systemd service logs:

Check the daemonset logs:

Dump pod and container logs as well if at all possible:

```
kubectl describe pods ${POD_NAME}
kubectl logs ${POD_NAME}
crictl logs ${CONTAINER_ID}
```

Check TrueNAS access logs.
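These checks can be bundled into one rough sketch. It assumes the zfs-nfs release name and democratic-csi namespace used in this article, so the daemonset and deployment are called zfs-nfs-democratic-csi-node and zfs-nfs-democratic-csi-controller; the function name is hypothetical. `kubectl logs` on a daemonset or deployment reads from a single one of its pods, so repeat with a specific pod name when the problem only affects some nodes.

```shell
# Hypothetical helper: dump recent cluster events and democratic-csi logs.
# Assumes the Helm release name (default zfs-nfs) used in this article.
collect_csi_diagnostics() {
  local ns="${1:-democratic-csi}"
  local release="${2:-zfs-nfs}"
  # Most recent events across all namespaces, newest last.
  kubectl get events -A --sort-by=.lastTimestamp | tail -n 30
  # Pod details, including container states and restart reasons.
  kubectl -n "$ns" describe pods
  # Logs from every container of one node pod and of the controller pod.
  kubectl -n "$ns" logs "daemonset/${release}-democratic-csi-node" --all-containers=true --tail=100
  kubectl -n "$ns" logs "deployment/${release}-democratic-csi-controller" --all-containers=true --tail=100
}

# The kubelet runs outside the cluster, so check it on the node itself:
#   sudo journalctl -u kubelet --since "1 hour ago" --no-pager | tail -n 100
```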

I figured it out. I had set the wrong path for kubelet. I was setting that to the kubelet binary instead of /var/lib/kubelet

Hi, I’m running into an issue with the controller continually being restarted and going into CrashLoopBackOff. I’ve reinstalled the chart cleanly a couple of times. I also installed it with the raw YAML after doing a helm template and double-checking that none of the values are coming out strangely. Any idea what’s going on here? Hopefully I’m missing something obvious.

```
dave@nomad:~/code/home_lab/zfs$ kubectl get all -n democratic-csi
NAME                                                     READY   STATUS             RESTARTS       AGE
pod/zfs-nfs-democratic-csi-controller-544f7b74cc-t54t9   2/5     CrashLoopBackOff   21 (15s ago)   9m17s
pod/zfs-nfs-democratic-csi-node-6cwf7                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-6x9k4                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-c9zf8                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-d4xdh                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-gccv2                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-h42sv                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-mm2nz                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-mp8rf                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-phwzj                    4/4     Running            0              9m17s
pod/zfs-nfs-democratic-csi-node-wfdh2                    4/4     Running            0              9m17s

NAME                                         DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
daemonset.apps/zfs-nfs-democratic-csi-node   10        10        10      10           10          kubernetes.io/os=linux   9m17s

NAME                                                READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/zfs-nfs-democratic-csi-controller   0/1     1            0           9m17s

NAME                                                           DESIRED   CURRENT   READY   AGE
replicaset.apps/zfs-nfs-democratic-csi-controller-544f7b74cc   1         1         0       9m17s
```


Here are the logs from the controller, doesn’t matter which container in the pod I dump the logs for, same error:

```
dryhn@nomad:~/code/home_lab/zfs$ kubectl logs pod/zfs-nfs-democratic-csi-controller-544f7b74cc-t54t9 -c external-provisioner -n democratic-csi
I0325 18:14:00.864907 1 feature_gate.go:245] feature gates: &{map[]}
I0325 18:14:00.865156 1 csi-provisioner.go:139] Version: v3.1.0
I0325 18:14:00.865179 1 csi-provisioner.go:162] Building kube configs for running in cluster...
I0325 18:14:00.867427 1 connection.go:154] Connecting to unix:///csi-data/csi.sock
I0325 18:14:00.869143 1 common.go:111] Probing CSI driver for readiness
I0325 18:14:00.869197 1 connection.go:183] GRPC call: /csi.v1.Identity/Probe
I0325 18:14:00.869217 1 connection.go:184] GRPC request: {}
I0325 18:14:00.897279 1 connection.go:186] GRPC response: {}
I0325 18:14:00.897553 1 connection.go:187] GRPC error: rpc error: code = Internal desc = TypeError: Cannot read properties of undefined (reading 'replace') TypeError: Cannot read properties of undefined (reading 'replace')
    at FreeNASSshDriver.getDetachedSnapshotParentDatasetName (/home/csi/app/src/driver/controller-zfs/index.js:190:43)
    at FreeNASSshDriver.Probe (/home/csi/app/src/driver/controller-zfs/index.js:552:14)
    at requestHandlerProxy (/home/csi/app/bin/democratic-csi:217:49)
    at Object.Probe (/home/csi/app/bin/democratic-csi:290:7)
    at /home/csi/app/node_modules/@grpc/grpc-js/build/src/server.js:821:17
    at Http2ServerCallStream.safeDeserializeMessage (/home/csi/app/node_modules/@grpc/grpc-js/build/src/server-call.js:395:13)
    at ServerHttp2Stream.onEnd (/home/csi/app/node_modules/@grpc/grpc-js/build/src/server-call.js:382:22)
    at ServerHttp2Stream.emit (node:events:525:35)
    at endReadableNT (node:internal/streams/readable:1358:12)
    at processTicksAndRejections (node:internal/process/task_queues:83:21)
E0325 18:14:00.897634 1 csi-provisioner.go:197] CSI driver probe failed: rpc error: code = Internal desc = TypeError: Cannot read properties of undefined (reading 'replace') TypeError: Cannot read properties of undefined (reading 'replace')
    at FreeNASSshDriver.getDetachedSnapshotParentDatasetName (/home/csi/app/src/driver/controller-zfs/index.js:190:43)
    at FreeNASSshDriver.Probe (/home/csi/app/src/driver/controller-zfs/index.js:552:14)
    at requestHandlerProxy (/home/csi/app/bin/democratic-csi:217:49)
    at Object.Probe (/home/csi/app/bin/democratic-csi:290:7)
    at /home/csi/app/node_modules/@grpc/grpc-js/build/src/server.js:821:17
    at Http2ServerCallStream.safeDeserializeMessage (/home/csi/app/node_modules/@grpc/grpc-js/build/src/server-call.js:395:13)
    at ServerHttp2Stream.onEnd (/home/csi/app/node_modules/@grpc/grpc-js/build/src/server-call.js:382:22)
    at ServerHttp2Stream.emit (node:events:525:35)
    at endReadableNT (node:internal/streams/readable:1358:12)
    at processTicksAndRejections (node:internal/process/task_queues:83:21)
```

I figured it out… Silly problem… I’m new to TrueNAS altogether. I’m using the latest version (TrueNAS-13.0-U4) and couldn’t create datasets. In fact, I couldn’t see the ellipsis menu next to the pool at all. I couldn’t find any tutorials for this version, so I thought the option had been removed or something. Turns out, because I need glasses/can’t see really well and use Chrome’s zoom feature, the ellipsis that leads to dataset creation was pushed off the screen and I didn’t notice. After getting that figured out /facePalm, the notes from @zyc were very handy because that was the next issue on deck. Things appear to be working fine now. Thanks!

Hi Lisenet:

I am getting the errors below on the controller pods for iSCSI/NFS. I suspect something on the Calico CNI side, but I am unclear as to what might be causing this. Any help would be appreciated.

Lab setup is a VMware sandboxed env. k8s cluster on

FreeNAS setup external to env, 172.19.x.x.

- All nodes and the master have an additional mgmt NIC with an uplink to the net, so I can successfully ssh root@172.19.x.x to get to FreeNAS.

- Leveraging root/password and not an API key within the freenas-nfs/freenas-iscsi YAMLs.

```
NAME                                                   READY   STATUS              RESTARTS         AGE   IP            NODE                      NOMINATED NODE   READINESS GATES
zfs-iscsi-democratic-csi-controller-784db9f86d-29xg2   1/6     CrashLoopBackOff    1015 (32s ago)   16h   10.40.0.x     k8s-worker1.virtual.lab
zfs-iscsi-democratic-csi-node-ms6vg                    4/4     Running             0                16h   192.168.a.x   k8s-worker2.virtual.lab
zfs-iscsi-democratic-csi-node-ndcmd                    4/4     Running             0                16h   192.168.a.y   k8s-worker3.virtual.lab
zfs-iscsi-democratic-csi-node-vhfqj                    4/4     Running             0                16h   192.168.a.z   k8s-worker1.virtual.lab
zfs-nfs-democratic-csi-controller-df5866c7d-bn5rn      0/6     ContainerCreating   0                16h                 k8s-worker2.virtual.lab
zfs-nfs-democratic-csi-node-2lpqg                      4/4     Running             0                16h   192.168.a.z   k8s-worker3.virtual.lab
zfs-nfs-democratic-csi-node-jb26s                      4/4     Running             0                16h   192.168.a.x   k8s-worker1.virtual.lab
zfs-nfs-democratic-csi-node-qdx9g                      4/4     Running             0                16h   192.168.a.y   k8s-worker2.virtual.lab
```

- kubectl describe pod results:

ISCSI controller:

Name: zfs-iscsi-democratic-csi-controller-784db9f86d-29xg2

Namespace: democratic-csi

Priority: 0

Service Account: zfs-iscsi-democratic-csi-controller-sa

Node: k8s-worker1.virtual.lab/192.168.a.x

Start Time: Mon, 09 Oct 2023 15:18:31 -0400

Labels: app.kubernetes.io/component=controller-linux

app.kubernetes.io/csi-role=controller

app.kubernetes.io/instance=zfs-iscsi

app.kubernetes.io/managed-by=Helm

app.kubernetes.io/name=democratic-csi

pod-template-hash=784db9f86d

Annotations: checksum/configmap: a8a5e1812efe56f6d3feb5b1497b6c0bac129fd7e9ba36203e5137727baed06f

checksum/secret: 2a0ba4b14b89ac6dc697be733fea109e95aee62abaa41cb6c4bb1b9b2ca035fb

Status: Running

IP: 10.40.0.x

IPs:

IP: 10.40.0.x

Controlled By: ReplicaSet/zfs-iscsi-democratic-csi-controller-784db9f86d

Containers:

external-attacher:

Container ID: containerd://3936e52b16a890369321fa574d519deb29f5547aa7a4d307a5bfbed898f0f545

Image: registry.k8s.io/sig-storage/csi-attacher:v4.3.0

Image ID: registry.k8s.io/sig-storage/csi-attacher@sha256:4eb73137b66381b7b5dfd4d21d460f4b4095347ab6ed4626e0199c29d8d021af

Port:

Host Port:

Args:

--v=5

--leader-election

--leader-election-namespace=democratic-csi

--timeout=90s

--worker-threads=10

--csi-address=/csi-data/csi.sock

State: Waiting

Reason: CrashLoopBackOff

Last State: Terminated

Reason: Error

Exit Code: 1

Started: Tue, 10 Oct 2023 08:12:49 -0400

Finished: Tue, 10 Oct 2023 08:12:49 -0400

Ready: False

Restart Count: 203

Environment:

Mounts:

/csi-data from socket-dir (rw)

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-5b8cw (ro)

external-provisioner:

Container ID: containerd://de01d1fc72a4527e02024a5733bc0c1bc0bf643d0b7b000ae090ea80250f7ee2

Image: registry.k8s.io/sig-storage/csi-provisioner:v3.5.0

Image ID: registry.k8s.io/sig-storage/csi-provisioner@sha256:d078dc174323407e8cc6f0f9abd4efaac5db27838f1564d0253d5e3233e3f17f

Port:

Host Port:

Args:

--v=5

--leader-election

--leader-election-namespace=democratic-csi

--timeout=90s

--worker-threads=10

--extra-create-metadata

--csi-address=/csi-data/csi.sock

State: Waiting

Reason: CrashLoopBackOff

Last State: Terminated

Reason: Error

Exit Code: 1

Started: Tue, 10 Oct 2023 08:12:49 -0400

Finished: Tue, 10 Oct 2023 08:12:49 -0400

Ready: False

Restart Count: 203

…..

…..

….

Events:

Type Reason Age From Message

—- —— —- —- ——-

Warning Unhealthy 23m (x189 over 16h) kubelet Liveness probe failed: grpc implementation: @grpc/grpc-js

Warning BackOff 3m25s (x5270 over 16h) kubelet Back-off restarting failed container

NFS controller:

Name: zfs-nfs-democratic-csi-controller-df5866c7d-bn5rn

Namespace: democratic-csi

Priority: 0

Service Account: zfs-nfs-democratic-csi-controller-sa

Node: k8s-worker2.virtual.lab/192.168.12.14

Start Time: Mon, 09 Oct 2023 15:48:56 -0400

Labels: app.kubernetes.io/component=controller-linux

app.kubernetes.io/csi-role=controller

app.kubernetes.io/instance=zfs-nfs

app.kubernetes.io/managed-by=Helm

app.kubernetes.io/name=democratic-csi

pod-template-hash=df5866c7d

Annotations: checksum/configmap: c8ee71c0aa17b0c84a0ca627cdb14f3aade323354081b870cb56f09293d03635

checksum/secret: 53183c5ecc7ed3a00e1b18e7e1cb47b1bab449efde5dc43d72c80b8f8247b549

Status: Pending

IP:

IPs:

Controlled By: ReplicaSet/zfs-nfs-democratic-csi-controller-df5866c7d

Containers:

external-attacher:

Container ID:

Image: registry.k8s.io/sig-storage/csi-attacher:v4.3.0

Image ID:

Port:

Host Port:

Args:

--v=5

--leader-election

--leader-election-namespace=democratic-csi

--timeout=90s

--worker-threads=10

--csi-address=/csi-data/csi.sock

State: Waiting

Reason: ContainerCreating

Ready: False

Restart Count: 0

Environment:

…..

….

….

Type Reason Age From Message

—- —— —- —- ——-

Warning FailedCreatePodSandBox 15s (x1248 over 4h33m) kubelet (combined from similar events): Failed to create pod sandbox: rpc error: code = Unknown desc = failed to setup network for sandbox "31aa5055e163132261216f383b70cfbcfc95a4f4db465428438576ba29e41fd0": plugin type="calico" failed (add): error getting ClusterInformation: Get "https://10.96.0.1:443/apis/crd.projectcalico.org/v1/clusterinformations/default": x509: certificate signed by unknown authority (possibly because of "crypto/rsa: verification error" while trying to verify candidate authority certificate "kubernetes")

This is a cause for concern:

Have you installed, then reset, and then re-installed your Kubernetes cluster by any chance?

Could you post the output of kubectl get no -o wide, please?

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master.virtual.lab Ready control-plane 62d v1.25.3 192.168.12.12 Rocky Linux 8.6 (Green Obsidian) 4.18.0-372.26.1.el8_6.x86_64 containerd://1.6.8

k8s-worker1.virtual.lab Ready 62d v1.25.3 192.168.12.13 Rocky Linux 8.6 (Green Obsidian) 4.18.0-372.26.1.el8_6.x86_64 containerd://1.6.8

k8s-worker2.virtual.lab Ready 62d v1.25.3 192.168.12.14 Rocky Linux 8.6 (Green Obsidian) 4.18.0-372.26.1.el8_6.x86_64 containerd://1.6.8

k8s-worker3.virtual.lab Ready 62d v1.25.3 192.168.12.15 Rocky Linux 8.6 (Green Obsidian) 4.18.0-372.26.1.el8_6.x86_64 containerd://1.6.8
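One way to test the reinstall theory is to compare the cluster CA with the CA that Calico's CNI plugin trusts on a worker node. The sketch below is only a diagnostic aid and rests on assumptions: a kubeadm cluster with the CA at /etc/kubernetes/pki/ca.crt, and a Calico CNI kubeconfig at /etc/cni/net.d/calico-kubeconfig that stores its CA inline as certificate-authority-data. Check both paths on your own nodes; the helper names are hypothetical.

```shell
#!/bin/sh
# Sketch: compare the cluster CA with the CA embedded in Calico's CNI kubeconfig.
# Assumed paths (kubeadm + Calico defaults, verify on your nodes):
#   /etc/kubernetes/pki/ca.crt and /etc/cni/net.d/calico-kubeconfig
# pem_fingerprint, kubeconfig_ca_fingerprint and compare_ca are hypothetical helpers.

# Print the SHA-256 fingerprint of a PEM certificate file.
pem_fingerprint() {
  openssl x509 -noout -fingerprint -sha256 -in "$1"
}

# Print the SHA-256 fingerprint of the first inline
# certificate-authority-data entry found in a kubeconfig file.
kubeconfig_ca_fingerprint() {
  sed -n 's/^[[:space:]]*certificate-authority-data:[[:space:]]*//p' "$1" |
    head -n 1 | base64 -d | openssl x509 -noout -fingerprint -sha256
}

# Return 0 if both CAs match, 1 on mismatch, 2 if either CA could not be read.
compare_ca() {
  cluster_ca=$(pem_fingerprint "$1") || return 2
  cni_ca=$(kubeconfig_ca_fingerprint "$2") || return 2
  if [ "$cluster_ca" = "$cni_ca" ]; then
    echo "CA match: $cluster_ca"
  else
    echo "CA mismatch: cluster $cluster_ca, CNI $cni_ca"
    return 1
  fi
}
```

On a worker, this would be run as compare_ca /etc/kubernetes/pki/ca.crt /etc/cni/net.d/calico-kubeconfig. A mismatch would be consistent with the x509 "unknown authority" error above, i.e. Calico still trusting a CA from an earlier install.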

There is a recent breaking change in democratic-csi: datasetPermissionsUser and datasetPermissionsGroup must now be numeric (a UID/GID rather than a name). Posting this in case anyone runs into issues later.
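To catch this before upgrading, the values file can be scanned for those two keys. This is a minimal sketch, not a YAML parser: it assumes the simple one-key-per-line layout of values files such as freenas-nfs.yaml, and the function name is hypothetical. To be conservative, it flags anything that is not a bare unquoted integer, so a quoted "0" is reported as well.

```shell
#!/bin/sh
# Sketch: report datasetPermissionsUser / datasetPermissionsGroup entries
# that are not bare integers in a democratic-csi Helm values file.
# Line-based scan, not a YAML parser; check_dataset_permissions is hypothetical.
# Exits 0 if every entry is numeric, 1 otherwise (details go to stderr).
check_dataset_permissions() (
  status=0
  # "|| [ -n "$key" ]" also processes a final line without a trailing newline.
  while IFS=: read -r key value || [ -n "$key" ]; do
    # Strip indentation from the key and any trailing "# comment" from the value.
    key=$(printf '%s' "$key" | tr -d '[:space:]')
    value=${value%%#*}
    value=$(printf '%s' "$value" | tr -d '[:space:]')
    case $key in
      datasetPermissionsUser|datasetPermissionsGroup)
        case $value in
          ''|*[!0-9]*)
            echo "non-numeric $key: '$value' (expected a numeric UID/GID)" >&2
            status=1
            ;;
        esac
        ;;
    esac
  done < "$1"
  exit "$status"
)
```

For example, check_dataset_permissions freenas-nfs.yaml prints nothing and succeeds when both keys hold plain numbers such as 0. The function body runs in a subshell so its loop variables don't leak into the calling shell.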

Thanks Oliver, appreciate it.